Exploring emerging remote and proximal sensing technologies for novel applications in crop protection is a nascent area of research at the James Hutton Institute, with the potential to produce many powerful tools for inclusion in Integrated Pest and Disease Management (IPM) programmes. The rationale underpinning this research is that the properties of the light (and other electromagnetic waves) reflected by plant surfaces depend on leaf surface attributes, internal structures and biochemical components, all of which are influenced in distinctive ways by crop genotype and by nutritional and disease status. We have conducted several research projects exploring various remote and proximal sensing technologies for potential applications in IPM.

The continuing rise in the availability of unmanned aerial vehicle (UAV) technology, combined with sensors that can measure crop reflectance at wavelengths beyond the visible spectrum, makes it possible to use automated image analysis to inform decision support systems for crop disease management.

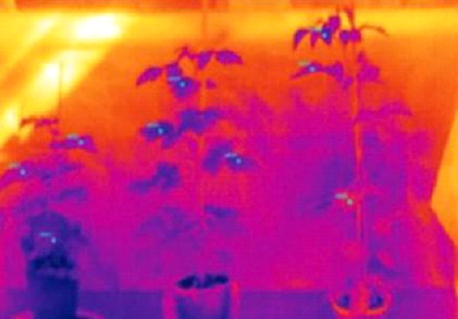

A mobile field phenotyping platform has been developed that integrates thermal, visual, and near- and short-wave infra-red sensors and cameras to measure changes in canopy temperature and leaf spectral properties. It is being used to investigate signatures of plant stress associated with early disease development under field conditions, which will allow the utility of imaging as a tool for high-throughput detection and diagnosis of biotic stress to be evaluated.